AI Token Economics

What Jensen Huang Is Really Building

At this year’s GTC conference, Jensen Huang spent about ninety seconds on something that had nothing to do with chips. He proposed that every Nvidia engineer receive an annual “inference budget,” a token allocation worth roughly half their base salary, as a standard part of compensation. An engineer making $300,000 would get another $150,000 in AI compute credits on top of that. He called it a recruiting tool and predicted it would become normal across Silicon Valley.

The tech press covered this as a compensation story. It’s not. It’s a pricing story, and the implications extend well beyond recruiting. Whether by deliberate strategy or the natural instincts of a CEO whose company benefits from ever-growing token demand, Jensen’s move has the structural effect of seeding how AI gets priced, purchased, and valued across the economy. It touches the cost recovery crisis facing every major AI company, the inevitable shift from subscription pricing to metered billing, the competitive dynamics that will determine which companies capture margin in the AI value chain, and the commodity market frameworks that could ultimately govern all of it. Understanding the connections between these threads reveals something important about where the industry is headed and where the money will flow.

The Cost Recovery Crisis

The AI industry’s cost/revenue mismatch is well documented at this point. OpenAI projects $17 billion in cash burn for 2026 against roughly $25 billion in annualized revenue, and doesn’t expect to turn cash-flow positive until 2030. Anthropic is spending approximately $19 billion on model training and inference this year against roughly equivalent revenue. One independent analysis found that a single user on a $20/month AI subscription can generate up to $163 in actual compute costs. Sam Altman has acknowledged publicly that OpenAI loses money on its $200/month ChatGPT Pro tier because usage far exceeded projections.

The all-you-can-eat subscription model that attracted hundreds of millions of users is structurally broken. Every heavy user is a net loss. The obvious fix is to charge more or to charge differently. But “we’re raising prices” is a growth killer. Users revolt. Competitors undercut.
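To make the unit economics concrete, here is a minimal Python sketch. The $20 price and the $163 compute cost are the figures cited above. Treating the $163 as a monthly worst case, and the $2 monthly compute cost for a light user, are my own assumptions for illustration, not reported data.

```python
import math

def monthly_margin(price: float, compute_cost: float) -> float:
    """Subscription revenue minus compute cost for one user in one month."""
    return price - compute_cost

def light_users_to_offset(price: float, heavy_cost: float, light_cost: float) -> int:
    """Number of light users whose combined margin covers one heavy user's loss."""
    heavy_loss = -monthly_margin(price, heavy_cost)
    light_margin = monthly_margin(price, light_cost)
    if heavy_loss <= 0:
        return 0  # the heavy user is already profitable
    if light_margin <= 0:
        raise ValueError("light users must be profitable to offset a loss")
    return math.ceil(heavy_loss / light_margin)

# $20 and $163 come from the text; the $2 light-user cost is hypothetical.
heavy_margin = monthly_margin(20.0, 163.0)
offset_count = light_users_to_offset(20.0, 163.0, 2.0)

print(f"Heavy user margin: ${heavy_margin:.2f} per month")
print(f"Light users needed to offset one heavy user: {offset_count}")
```

Under these assumptions, one worst-case heavy user erases the margin from about eight light users, which is why a flat price cannot survive a user base that skews toward heavy usage.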

What the industry needs is a way to transition from service-based pricing (pay for access) to consumption-based pricing (pay per token) without triggering a backlash. That transition requires the market to accept that tokens have intrinsic value, that they are worth something in and of themselves, not just as a byproduct of a service you subscribe to.

Jensen’s GTC announcement has the effect of building exactly that acceptance.

What Jensen’s Moves Set in Motion

Consider the mechanics. When Nvidia assigns $150,000 in token value to an engineer’s compensation package, several things happen simultaneously, whether or not they were planned as a coordinated strategy.

A dollar figure gets attached to a quantity of tokens. This is an anchoring event. It doesn’t matter that Nvidia’s internal cost to provision those tokens on its next-generation Vera Rubin hardware is a fraction of the nominal value. The number is in the offer letter. The market absorbs it.

Token consumption becomes a performance metric, not a cost center. On the All-In Pod, Jensen said that if a $500,000 engineer consumed only $5,000 worth of tokens in a year, he’d “go ape.” Under-consumption, not over-spending, is the organizational failure. That inverts decades of enterprise cost management thinking.

And the structural detail most coverage missed: the entity assigning dollar value to tokens is not an AI service provider. It’s the chip supplier. Nvidia doesn’t sell AI services. Nvidia manufactures the hardware that makes AI services possible. The company that builds the means of production is defining the unit of account for the downstream market.

Jensen was explicit about the framing. “Tokens are the new commodity,” he said from the GTC stage. “And like all commodities, once it reaches inflection and matures, it will segment into different parts.”

That language carries specific economic connotations about markets, pricing, and fungibility. Whatever else it is, it isn’t accidental. He’s describing tokens not as a product feature but as a tradeable resource with market dynamics.

Why Commoditization Solves the Pricing Problem

The commodity framing is more apt than it first appears, but it has limits worth understanding.

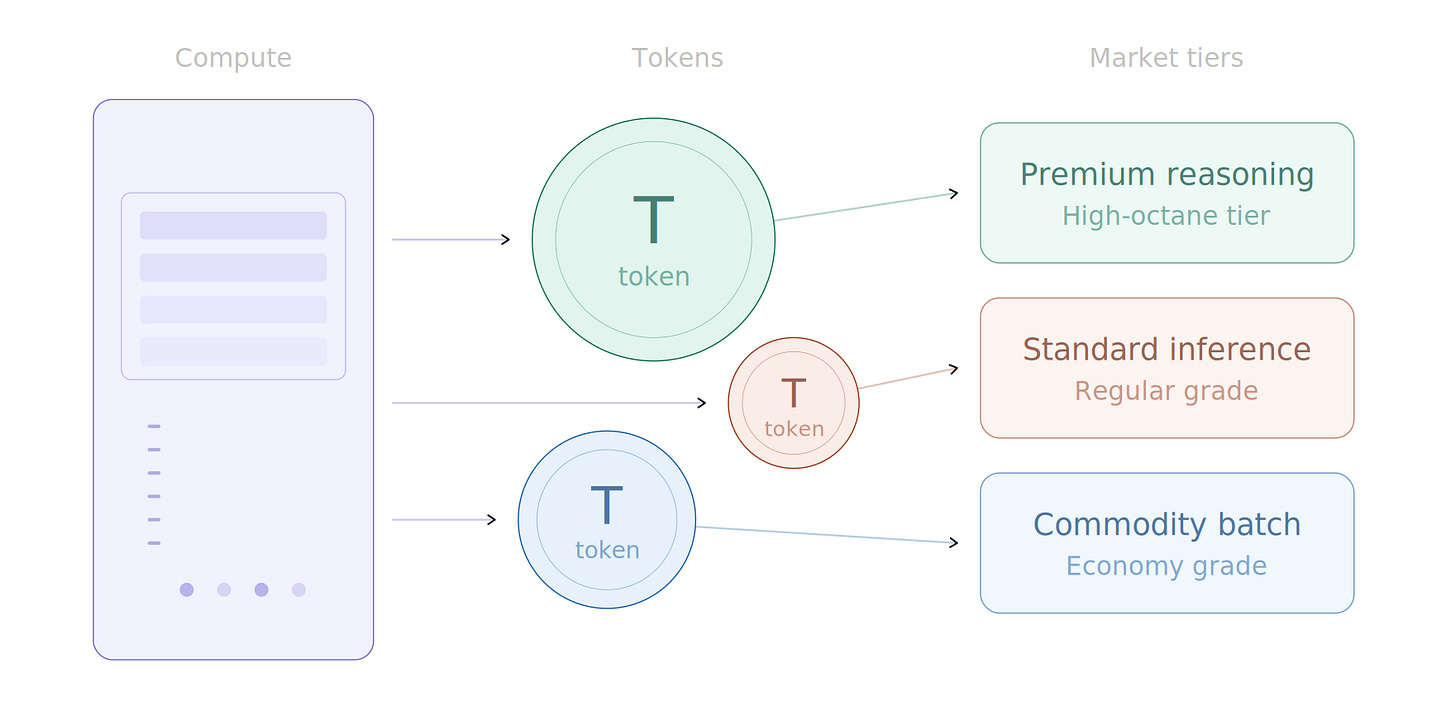

The compute infrastructure that produces tokens (GPU cycles, electricity, data center capacity) is fungible at the base layer, the same way crude oil is fungible regardless of where it was extracted. Tokens are the refined product. Like gasoline, they come in grades: regular inference, premium reasoning, high-octane multimodal. And like gasoline, what ultimately matters to the end user is the experience in the vehicle, not the molecular composition of the fuel. A prompt entered into Claude, GPT, or Gemini draws on the same underlying resource base. The differentiation is in the refinement and the engine it powers.

Today, each AI provider sets its own token pricing unilaterally. OpenAI charges one rate, Anthropic charges another, Google charges another. When a company raises prices, it owns that decision publicly. It’s an announcement, something users react to with their wallets.

But if a “market price” for tokens emerges, something analogous to West Texas Intermediate crude for oil, the provider’s relationship to pricing changes fundamentally. It becomes a pass-through rather than a price-setter. “Token prices are what they are,” the provider can say, the same way airlines point to jet fuel costs or utilities point to wholesale electricity rates. The company is no longer raising prices. The market is.

The gasoline analogy is instructive right now. Pump prices in the U.S. are elevated because of supply disruptions connected to the conflict in Iran, even though the United States is a net exporter of petroleum and the physical supply chain between crude extraction and retail delivery spans months. Once a commodity pricing framework exists, spot prices respond to narrative, perceived scarcity, geopolitical signals, and speculative positioning as much as they respond to physical supply and production cost. The abstraction layer between cost and price is where margin lives.

The same dynamic operates in electricity markets. Wholesale electricity pricing creates a layer of opacity that incumbent utilities use to justify rate structures to regulators and ratepayers. The actual cost of generating a kilowatt-hour at a given moment and the price a consumer pays for it are connected, but loosely, and the gap between them is defended by institutional complexity and information asymmetry.

AI tokens are being positioned to follow the same path. A standardized commodity framework doesn’t just enable price discovery. It gives providers cover to maintain prices even as their production costs fall.

But commodity markets have a second, less convenient property: over time, they tend to drive prices toward the cost of production. Cloud compute commoditized over the past fifteen years, and the result was relentless price compression. Token costs have already fallen 99% in three years. And unlike oil, where supply is geologically constrained and OPEC can credibly restrict production, compute supply is an engineering problem currently being addressed with hundreds of billions in capex. Open-source models from Meta, DeepSeek, and Mistral add further deflationary pressure by letting enterprises produce tokens on their own infrastructure.

This means the window for establishing commodity pricing norms is now, while GPU supply is tight, data center capacity is constrained, and demand is surging ahead of buildout. If the framework isn’t normalized before supply catches up with demand, the pricing leverage disappears. That makes Jensen’s timing more interesting, not less.

Demand, Friction, and the Efficiency Race

Jensen’s move isn’t just about pricing. It’s also about demand creation. His message to the industry is: if your engineers aren’t consuming vast quantities of tokens, your organization is falling behind. Enterprises will begin budgeting for token consumption the way they budget for SaaS licenses today, except with less price sensitivity, because under-consumption will be the visible failure mode rather than over-spending.

But an honest tension lives inside this transition. The all-you-can-eat model is dying because its economics are impossible. The replacement is metered, per-token billing. Metered billing means users have to think about whether the next prompt is worth the cost. That introduces friction. Friction reduces usage.

The competitive response to this friction will look familiar to anyone who has shopped for a car. Just as miles-per-gallon ratings became a way for automakers to differentiate on the value/quality continuum, AI providers will likely begin making token efficiency claims. “Our model answers this class of question in 40% fewer tokens than the competition.” “Our reasoning engine produces superior results with a smaller context window.” Efficiency becomes a brand attribute. The model that delivers the best output per token spent earns the loyalty of cost-conscious users, the same way a fuel-efficient car earns the loyalty of drivers who watch gas prices.

This creates healthy competitive pressure that partially offsets the friction problem. If providers compete on tokens-per-outcome rather than just raw capability, the effective cost to the user can fall even if the nominal price per token holds steady or rises. The commodity market for tokens thus creates incentives for efficiency at the model layer while enabling price discipline at the infrastructure layer.
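The claim that effective cost can fall while the nominal price rises is simple arithmetic: cost per outcome is the price per token times the tokens needed to reach the outcome. A rough sketch follows. All prices and token counts are hypothetical; the only figure borrowed from the text is the "40% fewer tokens" efficiency claim.

```python
def cost_per_outcome(price_per_million_tokens: float, tokens_per_outcome: int) -> float:
    """Dollar cost of producing one finished answer."""
    return price_per_million_tokens * tokens_per_outcome / 1_000_000

# Hypothetical baseline provider: $10 per million tokens, 5,000 tokens per answer.
baseline = cost_per_outcome(10.0, 5_000)

# Hypothetical efficient provider: 20% higher price, 40% fewer tokens per answer.
efficient = cost_per_outcome(12.0, 3_000)

change = efficient / baseline - 1
print(f"Baseline:  ${baseline:.3f} per answer")
print(f"Efficient: ${efficient:.3f} per answer")
print(f"Change in effective cost: {change:.0%}")
```

In this illustration the user pays a higher nominal token price and still spends about 28% less per answer, because the token count falls faster than the price rises.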

The Value Chain

Not all positions in the emerging token economy carry the same structural advantage.

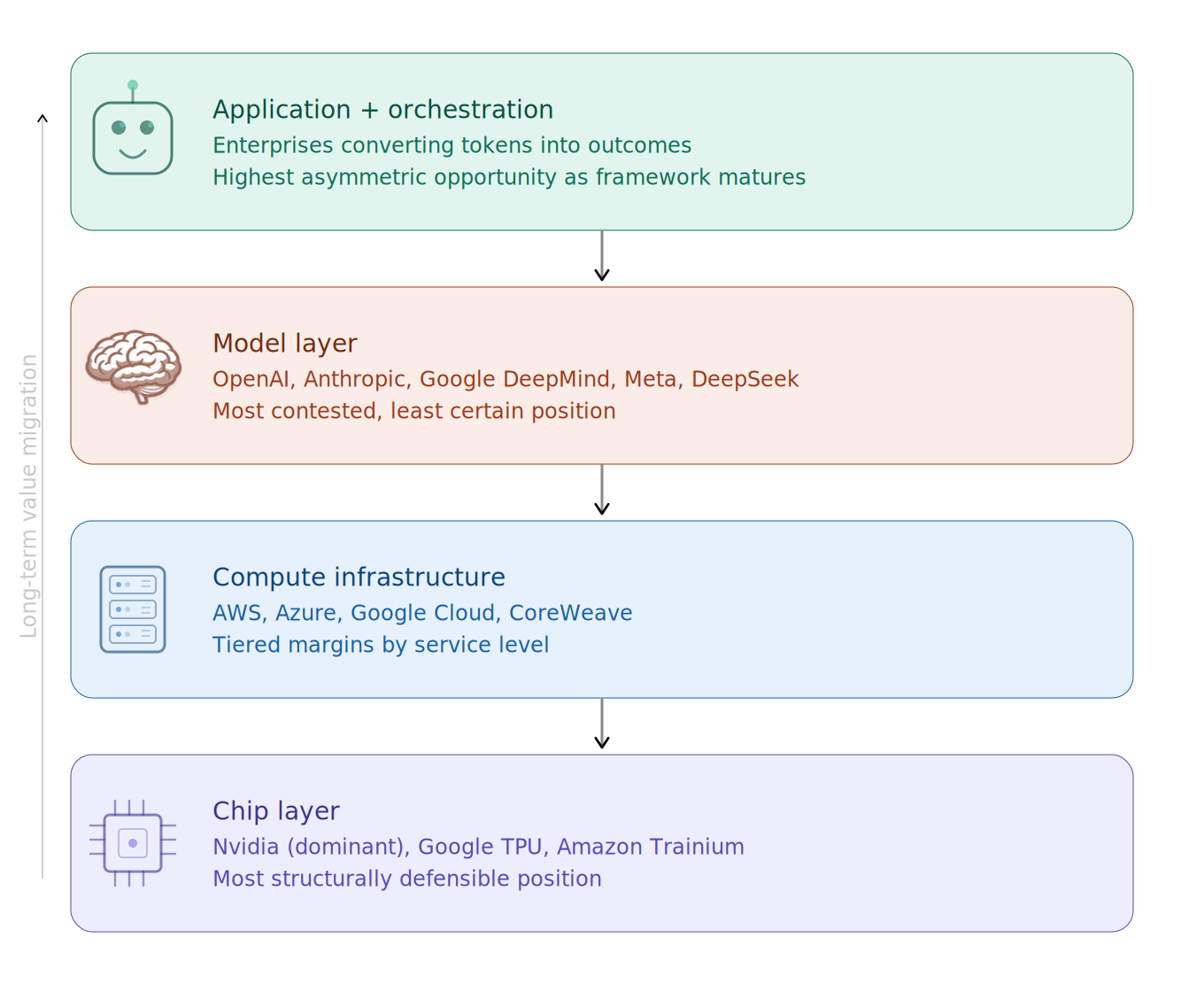

At the base sits the chip layer, dominated by Nvidia. This is the most favorable position. Every token produced anywhere, by any model, on any platform, requires silicon. Nvidia’s margins on AI accelerators are extraordinary, and each new hardware generation resets the performance frontier. As long as demand for tokens grows, Nvidia captures value regardless of which AI company “wins” downstream. The risk is that custom silicon from hyperscalers (Google’s TPUs, Amazon’s Trainium, Microsoft’s Maia) gradually erodes this dominance, but that erosion is measured in years, not quarters.

Above the chip layer sits the compute infrastructure layer: the hyperscalers and emerging “AI factory” operators like CoreWeave. These players convert chips into token-producing capacity. Their value capture depends on utilization rates and their ability to tier services: raw commodity tokens at thin margins for simple tasks, premium tokens (lower latency, larger context, higher reliability) at healthier margins for enterprise workloads. This mirrors how cloud computing matured: commodity compute at razor-thin margins, managed services at much better ones.

The model layer is where OpenAI, Anthropic, Google DeepMind, and others compete. This is the most contested and least certain position. Token costs have fallen 99% in three years as models have grown more efficient. DeepSeek demonstrated that a smaller team with lower costs can achieve near-frontier performance. Pure model capability, absent a distribution or lock-in advantage, is a commodity in formation. The model providers that survive will be those that achieve massive consumer scale (OpenAI’s play) or build deep enterprise relationships where switching costs are high (Anthropic’s play).

At the top sits the application and orchestration layer: the enterprises and platforms that consume tokens to deliver outcomes. This is where the token efficiency competition will matter most. A legal AI platform that resolves a contract review in 10,000 tokens vs. a competitor that requires 50,000 tokens has an 80% cost advantage on inference, which translates directly to margin. The winners at this layer will be those who build the tightest coupling between tokens consumed and value delivered.
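The 80% figure holds when both platforms pay the same price per token. The short sketch below checks that arithmetic and shows how the advantage shrinks if the efficient platform relies on a pricier model. The 2x price multiplier is a hypothetical of mine, not something from the example above.

```python
def inference_cost_advantage(
    efficient_tokens: int,
    baseline_tokens: int,
    efficient_price: float = 1.0,
    baseline_price: float = 1.0,
) -> float:
    """Fractional inference cost saving of the efficient platform vs. the baseline.

    Prices are relative per-token prices; only their ratio matters.
    """
    efficient_cost = efficient_tokens * efficient_price
    baseline_cost = baseline_tokens * baseline_price
    return 1 - efficient_cost / baseline_cost

# Token counts from the text, equal per-token price.
same_price = inference_cost_advantage(10_000, 50_000)

# Hypothetical: the efficient platform pays twice as much per token.
premium_model = inference_cost_advantage(10_000, 50_000, efficient_price=2.0)

print(f"Same token price:   {same_price:.0%} cost advantage")
print(f"2x token price:     {premium_model:.0%} cost advantage")
```

The point of the second case is that token efficiency and token price trade off against each other, so the metric that matters is total cost per completed task.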

Phases of the Token Economy

I see this trajectory playing out in three distinct phases, with a possible fourth.

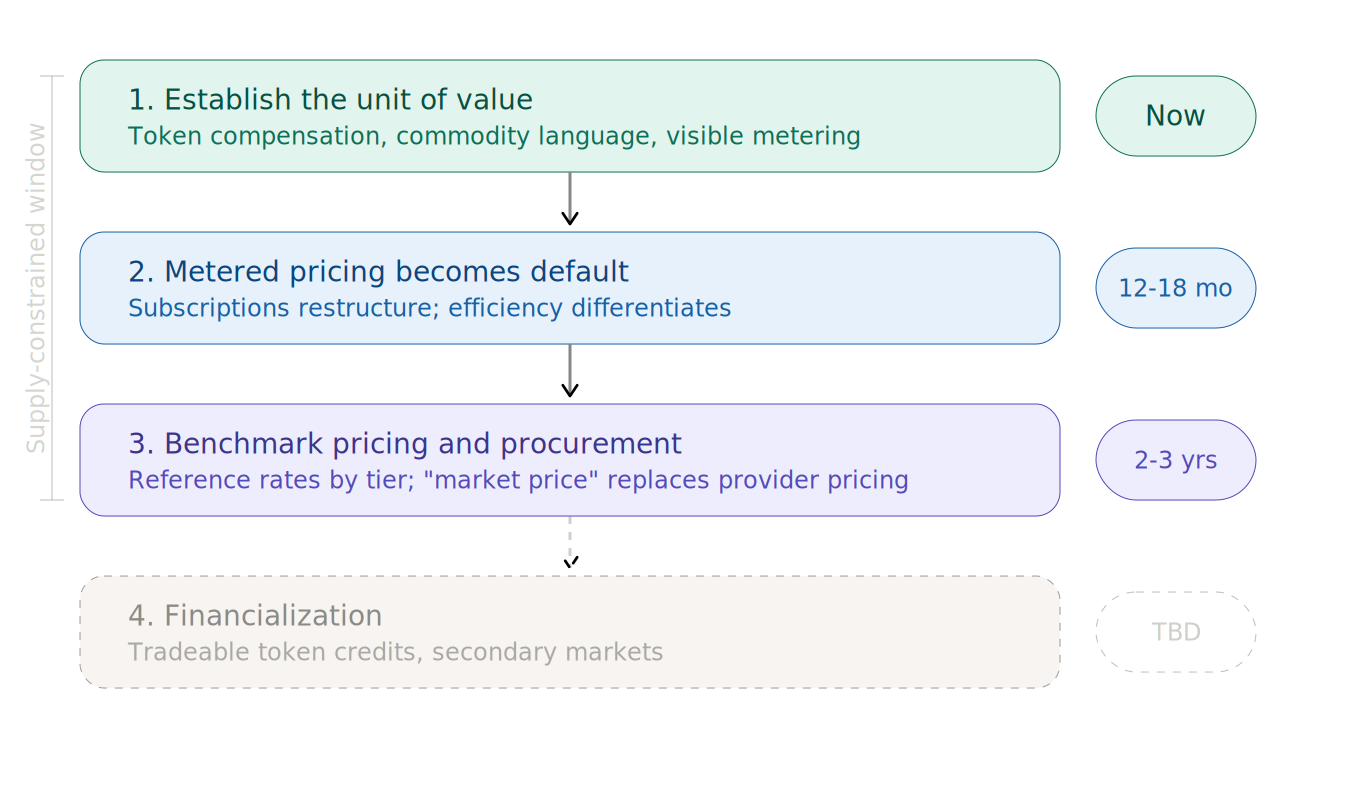

Phase 1: Establishing the unit of value. This is happening now. Jensen’s compensation scheme assigns dollar values to tokens. The “tokens are the new commodity” language gives the market a conceptual framework. This is the moment when the commodity shifts from being priced in inconsistent, provider-specific terms to being recognized as a standardized unit. The metered billing transition at OpenAI, Anthropic, and others reinforces this by making token consumption visible and quantifiable.

Phase 2: Metered pricing becomes the default. Over the next 12 to 18 months, subscription models will either disappear or restructure around token budgets with overage charges, similar to how mobile data plans evolved from unlimited to metered. This phase is where demand friction shows up, and where token efficiency becomes a competitive differentiator among providers. The cultural groundwork from Phase 1 is what allows this transition to feel natural rather than punitive.
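A token budget with overage charges works like a mobile data plan: a flat fee covers an included allowance, and usage beyond it is billed per unit. Here is a minimal sketch of that billing rule. The fee, allowance, and overage rate are hypothetical numbers chosen for illustration, not any provider's actual pricing.

```python
def monthly_bill(
    base_fee: float,
    included_tokens: int,
    tokens_used: int,
    overage_per_million: float,
) -> float:
    """Flat fee plus a per-million-token charge on usage above the allowance."""
    overage_tokens = max(0, tokens_used - included_tokens)
    return base_fee + overage_tokens / 1_000_000 * overage_per_million

# Hypothetical plan: $20 base fee, 5M tokens included, $4 per extra million.
light_month = monthly_bill(20.0, 5_000_000, 2_000_000, 4.0)
heavy_month = monthly_bill(20.0, 5_000_000, 8_000_000, 4.0)

print(f"Light month: ${light_month:.2f}")
print(f"Heavy month: ${heavy_month:.2f}")
```

Under this structure a light user sees no change from today's flat subscription, while a heavy user's bill scales with consumption, which is where the demand friction enters.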

Phase 3: Benchmark pricing and procurement. Within two to three years, some reference rate for a “standard token” becomes widely cited, probably segmented by capability tier: commodity inference, reasoning, multimodal, real-time. Token pre-purchase commitments become normal enterprise procurement, similar to how large companies buy cloud compute capacity through reserved instances today. The price discovery mechanism shifts from “what does OpenAI charge” to “what’s the market rate,” giving providers narrative cover to maintain prices above production cost, at least until supply expansion and open-source competition force further compression.

Phase 4: Financialization (provisional). There’s a plausible longer-term scenario in which token credits become tradeable instruments. Enterprises that over-purchased capacity sell excess on secondary markets. Speculators enter. The price of tokens becomes partially decoupled from the cost of production, just as oil prices are partially decoupled from extraction costs. But this depends on a degree of standardization and fungibility that doesn’t yet exist, and may not develop if the deflationary pressures described above outrun the commodity framework. It’s a scenario worth watching for, not one to build an investment thesis around today.

Can the Investment Be Recovered?

AI companies collectively plan to spend approximately $690 billion in capital expenditure in 2026. The question of whether that can be recovered is inseparable from whether a commodity pricing framework for tokens actually takes hold.

On the cost side, the trajectory is favorable. Each new generation of chips produces more tokens per watt at lower cost. Nvidia’s own roadmap assumes that the nominal value of an engineer’s $150,000 token budget stays constant while the real cost to provision it drops with each hardware generation. That’s a built-in margin expansion mechanism, and it’s a prerequisite for the downstream AI providers to eventually reach profitability.
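The margin expansion mechanism can be sketched in a few lines. The $150,000 nominal value comes from the text. The starting provisioning cost of $45,000 and the 50% cost decline per hardware generation are placeholder assumptions of mine; Nvidia has not published these numbers.

```python
def margin_by_generation(
    nominal_value: float,
    initial_cost: float,
    cost_decline: float,
    generations: int,
) -> list[tuple[int, float, float]]:
    """Return (generation, provisioning_cost, margin) for each hardware generation.

    The nominal token budget stays fixed while provisioning cost falls by
    `cost_decline` (a fraction between 0 and 1) each generation.
    """
    rows = []
    cost = initial_cost
    for generation in range(generations):
        rows.append((generation, cost, nominal_value - cost))
        cost *= 1 - cost_decline
    return rows

# $150,000 is from the text; $45,000 and the 50% decline are hypothetical.
rows = margin_by_generation(150_000, 45_000.0, 0.5, 3)
for generation, cost, margin in rows:
    print(f"Gen {generation}: cost ${cost:,.0f}, margin ${margin:,.0f}")
```

Whatever the real inputs are, the shape is the same: a fixed nominal value over a falling cost base widens the margin with each generation.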

On the demand side, the picture is less certain. A March 2026 Goldman Sachs analysis found that AI delivers roughly 30% productivity gains on specific, targeted tasks like customer support and software development, but found no meaningful relationship between AI adoption and economy-wide productivity. If AI remains a tool for specific high-value applications rather than becoming a universal infrastructure layer, the total addressable market for tokens may not grow fast enough to absorb the supply coming online.

My read is that the infrastructure investment is recoverable, but not by every player. The value chain will compress. Some model providers will fail or be absorbed. Some compute buildouts will become stranded assets. The survivors will be those at structural chokepoints (Nvidia’s chip position, the hyperscalers’ distribution advantage) and those who build defensible positions in high-value application verticals where token consumption translates directly to measurable ROI.

What This Means

Jensen Huang didn’t announce an employee benefit at GTC. Whether by strategy or structural instinct, he set in motion the conditions for the AI industry to reframe how it charges for its core product. Tokens as commodity, tokens as compensation, tokens as the unit of account for a new economic layer: the effect is to normalize a pricing framework the industry desperately needs in order to close the gap between what AI costs and what users pay.

The window for establishing that framework is constrained by the same forces that make it possible. Supply tightness gives the commodity framing its credibility. When supply loosens, prices will face downward pressure regardless of what framework is in place. The companies that will have benefited most are those that used this window to lock in enterprise relationships, establish procurement norms, and build token efficiency into a durable competitive advantage.

For investors, the implications are structural. The chip layer is the most defensible position in the near term, but the long-term value migration will be toward whoever controls the relationship between token consumption and business outcomes. The model layer is a dangerous place to invest unless you have conviction about which provider achieves either dominant scale or deep enterprise lock-in. The application layer, where tokens are converted into measurable value, is where the most asymmetric opportunities will emerge as the commodity framework matures.

The token economy is being built in real time. The foundations were poured at GTC 2026.